Does Polling Still Work?

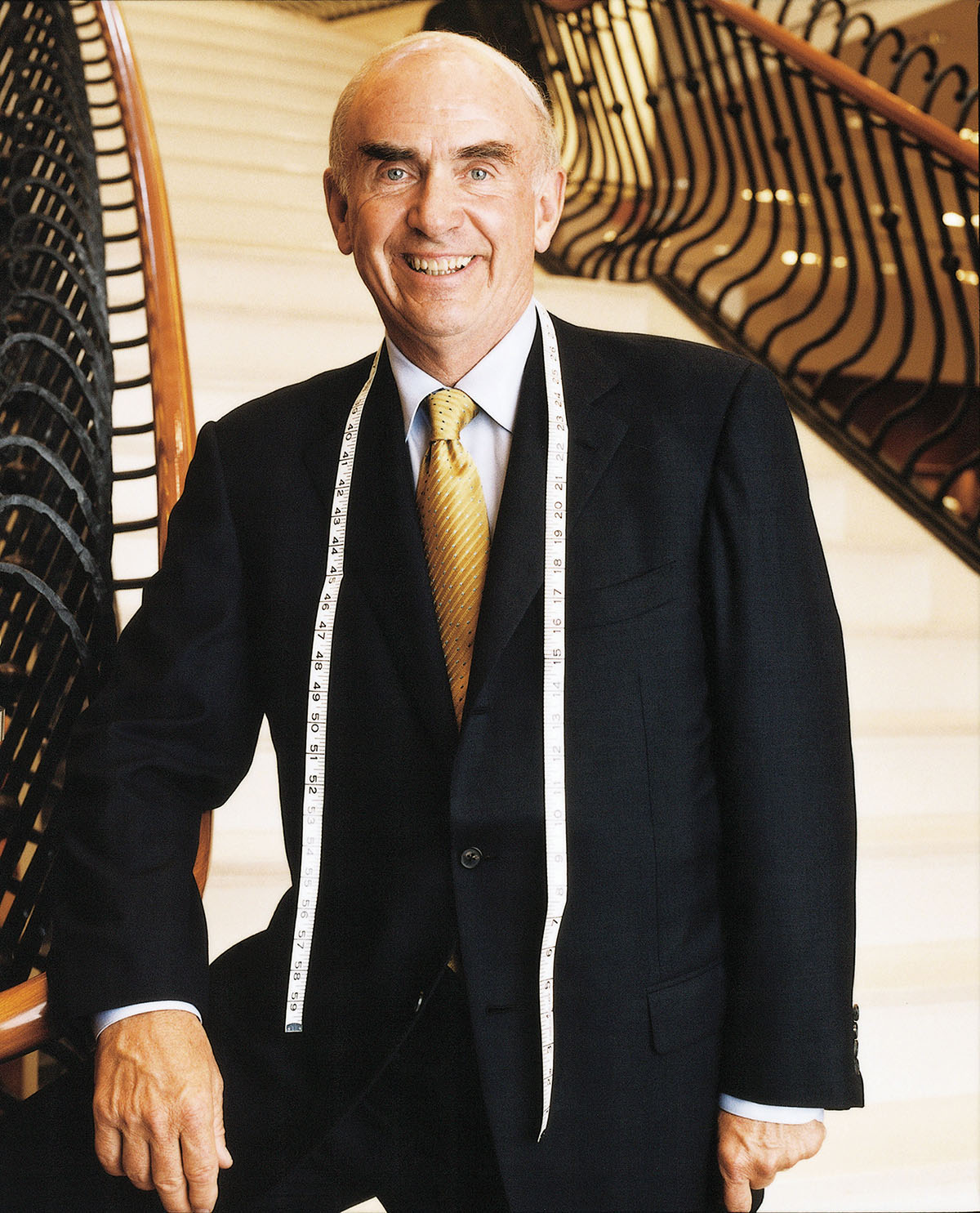

By Assistant Professor of Government Steven T. Moore

Surveying public opinion on political and cultural issues has become a big part of the election cycle. But how accurate is polling these days? Assuming you ask a truly random sample of Americans, their answers should reflect those of the entire country—until they don’t, as was the case for many pre-election poll results in 2016 and 2020.

Contrary to popular belief, national election polls in the last two presidential elections came close to capturing the overall vote split. However, because of the Electoral College, forecasters needed to estimate the results of 50 different state-level contests, an incredibly difficult task that leaves considerably more room for error. Why was this so problematic in 2016 and 2020? The decreased use of landlines is partly to blame. For most of the last century, Americans were much easier to reach. While more work clearly needs to be done to contact harder-to-reach populations, their absence from polling responses is less of a problem at higher levels of data aggregation.

What’s really to blame for the decline in state-level polling accuracy?

- Americans’ opinions on policy issues are unstable. Irrelevant factors can lead to large shifts in opinion. Many Republicans respond more favorably to the Inflation Reduction Act if they are not first reminded that it was passed under President Biden. The cue of who passed the bill can activate a more partisan lens that views the exact same policy very differently.

- Partisanship has become a big predictor of how the economy is judged, with the president’s co-partisans generally viewing the economy as strong. Republicans use inflation—which is higher than it’s been in decades—to judge President Biden’s performance, and Democrats focus more on unemployment—which is at its lowest point in decades.

- Many Americans don’t know that much about politics. You don’t need to be able to name every cabinet secretary to make reasonable political choices, but having a sense of different policy debates and where the parties stand on these issues is crucial. Asking people without such knowledge serious questions about their policy attitudes isn’t likely to produce serious answers. At best, they will repeat messages they might have heard from others. Sometimes they simply make up an answer on the spot.

This doesn’t mean we should abandon trying to assess public opinion. But we should be very careful about what we ask and how we make sense of it. Polls that show close races where the lead is smaller than the margin of error should be understood as a statistical tie. And we should provide context for those being surveyed to ensure they have the proper background to provide meaningful answers. But we must be careful not to cue partisanship or other key social groupings that could skew responses in a certain direction.
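To make the margin-of-error point concrete, here is a minimal sketch in Python. It assumes a simple random sample and the conventional 95% confidence level; the function names and the poll numbers are hypothetical and chosen only for illustration, not drawn from any real survey.

```python
import math

def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Approximate 95% margin of error for a single proportion.

    Assumes a simple random sample; proportion=0.5 is the worst case
    that pollsters commonly report.
    """
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)

def is_statistical_tie(share_a, share_b, sample_size):
    """Rule of thumb from the text: the lead is smaller than the margin of error."""
    lead = abs(share_a - share_b)
    return lead < margin_of_error(sample_size)

# Hypothetical poll: 1,000 respondents, 48% vs. 46%
moe = margin_of_error(1000)
print(f"margin of error: +/-{moe * 100:.1f} points")
print("statistical tie:", is_statistical_tie(0.48, 0.46, 1000))
```

With these made-up numbers, a two-point lead falls inside a margin of error of roughly three points, so the race would be read as a statistical tie. Real polls often use weighting and other adjustments that this sketch ignores.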

If we account for these pitfalls, polling can still provide invaluable information on what the public believes and how people will behave politically.

Assistant Professor of Government Steven T. Moore’s work focuses on attitudes around race and politics in American politics, and particularly how these attitudes shape public opinion, how they are reflected in news media, and how they shape meaningful political behavior. In Fall 2022 he launched a lab that will give students firsthand experience with survey research and an opportunity to use computational and applied data science to investigate questions and issues.

Read more about Professor Moore’s research in the Wesleyan Connection.